A new data format for LLMs called TOON (Token-Oriented Object Notation) has been getting some crazy attention in the last couple of weeks.

First: I think it’s a very cool concept, and the repo shows an impressive engineering effort in a very short time.

Having said that, I think it’s worth trying to understand which use cases TOON is actually the best option for.

(I’m not saying anything that doesn’t appear in the TOON repo itself, btw - just trying to take a closer look at a fairly over-hyped situation)

What is TOON?

From the repo:

Token-Oriented Object Notation is a compact, human-readable encoding of the JSON data model for LLM prompts. It provides a lossless serialization of the same objects, arrays, and primitives as JSON, but in a syntax that minimizes tokens and makes structure easy for models to follow.

The basic idea sounds very good: send the same information as JSON, but with fewer tokens and no loss of accuracy.

The high-level benchmarks on the README look promising, too:

    TOON          ████████████████████  26.9  │  73.9% acc  │  2,744 tokens
    JSON compact  █████████████████░░░  22.9  │  70.7% acc  │  3,081 tokens
    YAML          ██████████████░░░░░░  18.6  │  69.0% acc  │  3,719 tokens
    JSON          ███████████░░░░░░░░░  15.3  │  69.7% acc  │  4,545 tokens
    XML           ██████████░░░░░░░░░░  13.0  │  67.1% acc  │  5,167 tokens
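The first number on each row appears to be an efficiency score: accuracy percentage points per 1,000 tokens. Here is a small sketch to check that reading against the figures above. The `BenchRow` type and the `efficiency` helper are names made up for this illustration, and the formula is my assumption about how the score is derived.

```typescript
// Hypothetical names; the numbers are copied from the chart above.
interface BenchRow {
  format: string;
  accuracyPct: number;
  tokens: number;
}

const benchRows: BenchRow[] = [
  { format: "TOON", accuracyPct: 73.9, tokens: 2744 },
  { format: "JSON compact", accuracyPct: 70.7, tokens: 3081 },
  { format: "YAML", accuracyPct: 69.0, tokens: 3719 },
  { format: "JSON", accuracyPct: 69.7, tokens: 4545 },
  { format: "XML", accuracyPct: 67.1, tokens: 5167 },
];

// Assumed definition: accuracy points per 1,000 tokens, rounded to one decimal.
function efficiency(row: BenchRow): number {
  return Math.round((row.accuracyPct / row.tokens) * 1000 * 10) / 10;
}

const scores = benchRows.map((r) => ({ format: r.format, score: efficiency(r) }));
for (const s of scores) {
  console.log(`${s.format}: ${s.score}`);
}
```

Under that assumption the computed values match the chart (26.9, 22.9, 18.6, 15.3, 13.0), so the ranking rewards small token counts as much as it rewards accuracy.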

Should you use TOON?

But…

These are aggregate results.

The question you should ask yourself is not:

“Is TOON better than other formats on average?”

But rather:

“Is TOON better than others for my specific use case?”

Or more generally, as an industry:

“What are the use cases for which TOON is the best choice?”

What do the benchmarks show?

The TOON repo doesn’t pretend it’s a perfect match for everything:

TOON’s sweet spot is uniform arrays of objects (multiple fields per row, same structure across items).

It also discusses:

- The format’s similarity to CSV (and it really is very similar when the data is tabular)

- A useful list of “When Not to Use TOON”, which mentions, for example, that purely tabular or highly nested data have better alternatives.
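To see how close the two formats are on flat data, here is a sketch that renders the same rows as CSV and in the tabular array layout the TOON README shows (a `key[N]{fields}:` header followed by one indented, comma-separated line per item). This is a hand-rolled illustration, not the official encoder: `toCsv` and `toToonTable` are hypothetical helpers, and they assume a non-empty array, identical keys on every row, and values that need no quoting or escaping.

```typescript
type FlatRow = Record<string, string | number | boolean>;

// Assumes a non-empty array where every row has the same keys as the first.
function toCsv(rows: FlatRow[]): string {
  const fields = Object.keys(rows[0]);
  const lines = rows.map((row) => fields.map((f) => String(row[f])).join(","));
  return [fields.join(","), ...lines].join("\n");
}

// Same rows, with a TOON-style header that names the key, length, and fields.
function toToonTable(key: string, rows: FlatRow[]): string {
  const fields = Object.keys(rows[0]);
  const header = `${key}[${rows.length}]{${fields.join(",")}}:`;
  const lines = rows.map((row) => "  " + fields.map((f) => String(row[f])).join(","));
  return [header, ...lines].join("\n");
}

const employees: FlatRow[] = [
  { id: 1, name: "Alice", dept: "Eng" },
  { id: 2, name: "Bob", dept: "Ops" },
];

const csvText = toCsv(employees);
const toonText = toToonTable("employees", employees);
console.log(csvText);
console.log(toonText);
```

The row bodies are identical. In this sketch the only differences are the key name, the declared length, and the indentation, which is plausibly where TOON's small token overhead relative to CSV comes from.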

Several benchmarks are provided in the docs.

My takeaway is that TOON appears to be the best option in only a limited set of use cases.

Tabular data: CSV

Here are a couple of examples:

Uniform employee records

| Format | Accuracy | Tokens | Correct/Total |
|---|---|---|---|
| csv | 72.0% | 2,352 | 118/164 |
| toon | 73.8% | 2,518 | 121/164 |

Time-series analytics data

| Format | Accuracy | Tokens | Correct/Total |
|---|---|---|---|
| csv | 73.3% | 1,406 | 88/120 |
| toon | 72.5% | 1,548 | 87/120 |

These are pretty close.

CSV is more compact, and the accuracy difference isn’t significant either way (and probably depends on the model).
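One rough way to sanity-check "not significant" is a two-proportion z-test on the correct/total counts. The sketch below rests on a simplifying assumption: it treats the two formats' results as independent samples, whereas the benchmark asks the same questions in each format, so a paired test would be more appropriate if per-question results were available. `twoProportionZ` is a name made up here.

```typescript
// Two-proportion z statistic with a pooled standard error.
function twoProportionZ(
  correctA: number,
  totalA: number,
  correctB: number,
  totalB: number,
): number {
  const pA = correctA / totalA;
  const pB = correctB / totalB;
  const pooled = (correctA + correctB) / (totalA + totalB);
  const standardError = Math.sqrt(pooled * (1 - pooled) * (1 / totalA + 1 / totalB));
  return (pA - pB) / standardError;
}

// Counts copied from the two tables above (CSV first, then TOON).
const zEmployees = twoProportionZ(118, 164, 121, 164);
const zTimeSeries = twoProportionZ(88, 120, 87, 120);

// |z| < 1.96 means no significant difference at the conventional 5% level.
console.log(zEmployees.toFixed(2), Math.abs(zEmployees) < 1.96);
console.log(zTimeSeries.toFixed(2), Math.abs(zTimeSeries) < 1.96);
```

Both statistics come out well below 1.96 in absolute value, which is consistent with reading the accuracy gap as noise at these sample sizes.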

Larger complex data: Compact JSON

Semi-uniform event logs

| Format | Accuracy | Tokens | Correct/Total |
|---|---|---|---|
| json-compact | 63.3% | 4,819 | 76/120 |
| toon | 57.5% | 5,799 | 69/120 |

Cases where TOON was better:

Deeply nested configuration

On the one hand, this is a very interesting scenario, because it’s really free-form data, and TOON’s accuracy outperforms all other formats.

On the other hand, this is really small data: a single configuration sample of fewer than 1,000 tokens.

So it’s difficult to know whether this result will hold up on other data.

And when the data is this small, token differences don’t matter much either way (TOON actually uses more tokens than compact JSON here: 666 vs. 574).

| Format | Accuracy | Tokens | Correct/Total |
|---|---|---|---|
| json-compact | 92.2% | 574 | 107/116 |
| toon | 95.7% | 666 | 111/116 |
| yaml | 91.4% | 686 | 106/116 |
| json-pretty | 94.0% | 932 | 109/116 |
| xml | 92.2% | 1,018 | 107/116 |

E-commerce orders with nested structures

This is the sweet spot mentioned in the docs.

If this is your use case, TOON looks promising.

| Format | Accuracy | Tokens | Correct/Total |
|---|---|---|---|
| toon | 81.1% | 7,232 | 133/164 |
| json-compact | 76.8% | 6,794 | 126/164 |

To make this concrete, here is the structure of each order:

    export interface Order {
      orderId: string
      customer: {
        id: number
        name: string
        email: string
        phone: string
      }
      items: {
        sku: string
        name: string
        quantity: number
        price: number
      }[]
      subtotal: number
      tax: number
      total: number
      status: string
      orderDate?: string
      createdAt?: string
    }

What can we learn from this?

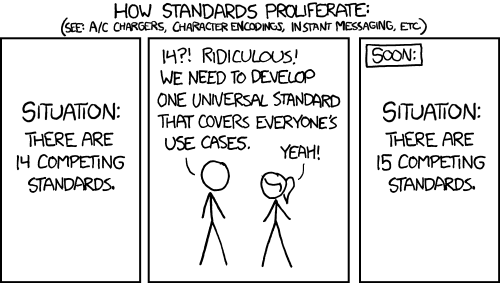

From an industry perspective, I can’t help but wonder if the improvement here really justifies another format. This immortal xkcd always makes a good point:

But putting that aside, the bottom line is that TOON shows promise in a couple of use cases, but it’s not the best solution in the most common ones (and it doesn’t claim to be).

What should you do?

I’d say these are the defaults:

    ┌──────────────────────────────────────────────────────────────────┐
    │ Is structured data tokens/accuracy actually a bottleneck for you?│
    └────────────────┬─────────────────────────────────────────────────┘
                     │
            ┌────────┴────────┐
            │                 │
            No               Yes
            │                 │
            ▼                 ▼
     ┌─────────────┐ ┌─────────────────────────┐
     │ Don't worry │ │ What's your data shape? │
     │ about it    │ └───────────┬─────────────┘
     └─────────────┘             │
          ┌────────────┬─────────┴───┬─────────────┐
          │            │             │             │
          ▼            ▼             ▼             ▼
    ┌───────────┐ ┌──────────┐ ┌────────────┐ ┌──────────┐
    │ Tabular   │ │ Highly   │ │ Arrays of  │ │Free-form │
    │ data      │ │ nested   │ │ objects    │ │ complex  │
    │ without   │ │ data     │ │ (not flat, │ │ data     │
    │ nesting   │ │          │ │ not deeply │ │          │
    │           │ │          │ │ nested)    │ │          │
    └─────┬─────┘ └────┬─────┘ └─────┬──────┘ └────┬─────┘
          │            │             │             │
          ▼            ▼             ▼             ▼
     ┌─────────┐  ┌──────────┐ ┌───────────┐ ┌────────────────┐
     │ CSV     │  │ Compact  │ │ Consider  │ │ Maybe TOON,    │
     │         │  │ JSON     │ │ TOON      │ │ test carefully │
     └─────────┘  └──────────┘ └───────────┘ └────────────────┘
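The same defaults, written as a small function in case that is easier to scan. The `DataShape` type and `suggestFormat` name are purely illustrative; the branches mirror the flowchart and nothing more.

```typescript
type DataShape = "tabular" | "highly-nested" | "arrays-of-objects" | "free-form";

// Mirrors the flowchart above; these are starting points, not guarantees.
function suggestFormat(isBottleneck: boolean, shape: DataShape): string {
  if (!isBottleneck) {
    return "don't worry about it";
  }
  switch (shape) {
    case "tabular":
      return "CSV";
    case "highly-nested":
      return "compact JSON";
    case "arrays-of-objects":
      return "consider TOON";
    case "free-form":
      return "maybe TOON, test carefully";
  }
}

console.log(suggestFormat(true, "arrays-of-objects"));
console.log(suggestFormat(false, "tabular"));
```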

And either way:

- Test the sensible alternatives on your actual data!

- Balance the improvements against the complexity of adding another format to your stack.
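A minimal sketch of what such a comparison could look like for the token side. Everything here is an assumption for illustration: `Candidate`, `compareFormats`, and especially `roughTokenCount`, which approximates tokens as characters divided by four. Swap in your model's actual tokenizer for real measurements. Note that this only measures size; accuracy has to be measured by running your own questions against the model.

```typescript
interface Candidate {
  name: string;
  encode: (data: unknown) => string;
}

// Crude stand-in for a tokenizer: roughly 4 characters per token.
function roughTokenCount(text: string): number {
  return Math.ceil(text.length / 4);
}

// Encodes the data with every candidate and sorts by estimated token count.
function compareFormats(data: unknown, candidates: Candidate[]) {
  return candidates
    .map((c) => ({ name: c.name, tokens: roughTokenCount(c.encode(data)) }))
    .sort((a, b) => a.tokens - b.tokens);
}

const sample = { orders: [{ orderId: "A-1", total: 42.5, status: "shipped" }] };

const report = compareFormats(sample, [
  { name: "json-pretty", encode: (d) => JSON.stringify(d, null, 2) },
  { name: "json-compact", encode: (d) => JSON.stringify(d) },
  // Add a CSV or TOON encoder here for the formats you are evaluating.
]);

console.log(report);
```

Run it on a representative slice of your real payloads, not on a toy object like `sample`; the ranking can change with data shape, as the benchmarks above suggest.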