What an AI Feedback Loop Looks Like

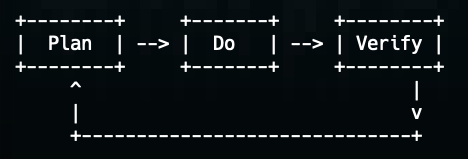

This post is part of a series about creating production-grade, maintainable AI-first projects using AI-first design patterns and frameworks. In the previous post I mentioned that an internal AI feedback loop will be central to all our AI-first design patterns. But “AI feedback loop” might mean different things to different people, so this lightweight post gives an example to make it concrete. We will implement a small (but realistic) project. The project is set up so that the agent has an internal feedback loop: its instructions tell it to work in a loop, and it has a clear way to create effective tests and run validations (the tests it creates, type-checking, and a linter). We’ll see how it makes mistakes, finds them, and self-heals. ...
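Before getting to the real project, here is a rough sketch of the shape of such a loop in Python. It is not the project's code: `feedback_loop`, `run_checks`, `revise`, and the toy check below are hypothetical names made up for illustration. In a real setup the checks would wrap the test runner, the type checker, and the linter, and `revise` would be the agent itself.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple

# Hypothetical types: a check takes the source code and returns (passed, message).
Check = Callable[[str], Tuple[bool, str]]
Revise = Callable[[str, List[str]], str]


@dataclass
class LoopResult:
    code: str
    revisions: int
    passed: bool


def run_checks(code: str, checks: List[Check]) -> List[str]:
    """Run every check and collect failure messages (empty list means all passed)."""
    failures = []
    for check in checks:
        ok, message = check(code)
        if not ok:
            failures.append(message)
    return failures


def feedback_loop(code: str, checks: List[Check], revise: Revise,
                  max_revisions: int = 5) -> LoopResult:
    """Validate, feed failures back to `revise`, and repeat until clean or out of budget."""
    revisions = 0
    while True:
        failures = run_checks(code, checks)
        if not failures:
            return LoopResult(code, revisions, True)
        if revisions == max_revisions:
            return LoopResult(code, revisions, False)
        code = revise(code, failures)
        revisions += 1


# Toy stand-ins (assumptions): a "test" for add(), and a "fix" that swaps the operator.
def add_returns_sum(code: str) -> Tuple[bool, str]:
    namespace: dict = {}
    exec(code, namespace)  # acceptable here only because the input is our own toy string
    return namespace["add"](2, 3) == 5, "add(2, 3) should equal 5"


def toy_revise(code: str, failures: List[str]) -> str:
    return code.replace("a - b", "a + b")


buggy = "def add(a, b):\n    return a - b\n"
result = feedback_loop(buggy, [add_returns_sum], toy_revise)
print(result.passed, result.revisions)
```

The `max_revisions` cap is a design choice in this sketch: without it, an agent that cannot fix a failure would loop forever instead of reporting that it is stuck.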