Here it is in PyCon US 2023

The root cause of many testing problems is improper test scope, i.e. the boundaries of the tests aren’t appropriate.

Test a cohesive whole - complete story

My approach here is that a test should verify a cohesive whole, a “complete story”.

It can be a large story, like an e2e test, or a small story that’s part of a bigger story, like a custom sorting function that something else uses.

As long as it’s something self-contained - something whole, it might be worth testing.
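To make the “small story” concrete, here is a minimal sketch. The function name `sort_titles` and its ordering rule (ignore case and a leading “The ”) are made up for illustration; the point is only that the test covers one self-contained thing from input to output.

```python
def sort_key(title: str) -> str:
    """Hypothetical ordering rule: ignore case and a leading 'The '."""
    lowered = title.lower()
    return lowered[4:] if lowered.startswith("the ") else lowered


def sort_titles(titles: list[str]) -> list[str]:
    """The self-contained 'small story': titles in, ordered titles out."""
    return sorted(titles, key=sort_key)


def test_sort_titles() -> None:
    # The test checks the whole story, not how sort_key is built internally.
    titles = ["the Hobbit", "Dune", "The Art of War", "emma"]
    assert sort_titles(titles) == ["The Art of War", "Dune", "emma", "the Hobbit"]


test_sort_titles()
```

The test never calls `sort_key` directly, so the rule can be reimplemented freely as long as the resulting order stays the same.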

It’s very close to the familiar advice to “test behavior, not implementation”, but I find that this phrasing is more useful.

Comparing two alternative test suites

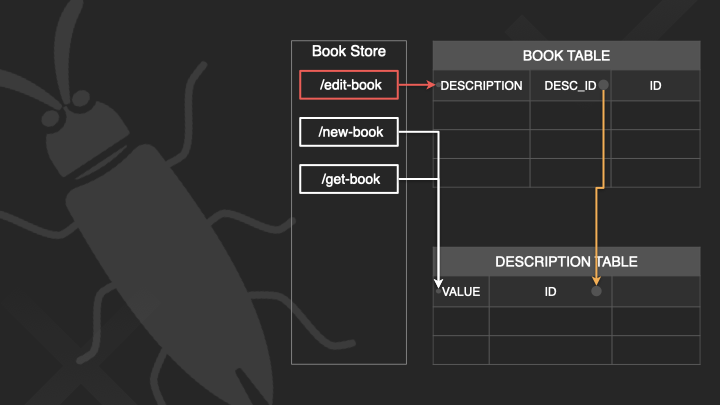

Let’s say we’re building a Book Store web service, and it uses a DB.

┌─────────────────────┐     ┌─────────────────────┐
│                     │     │                     │
│     Book Store      │ --> │        MySQL        │
│                     │     │                     │
└─────────────────────┘     └─────────────────────┘

We’ll do a small thought experiment.

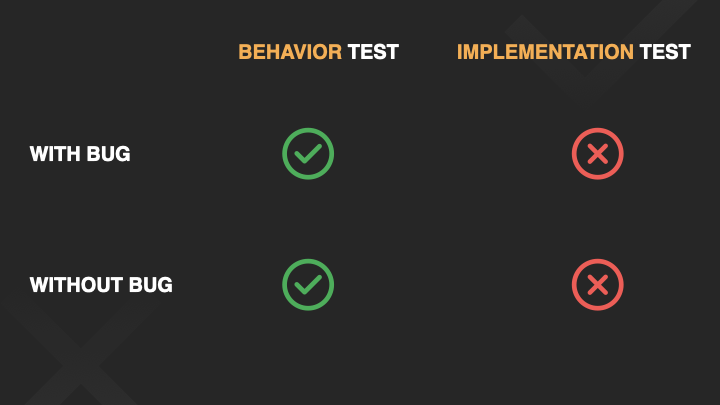

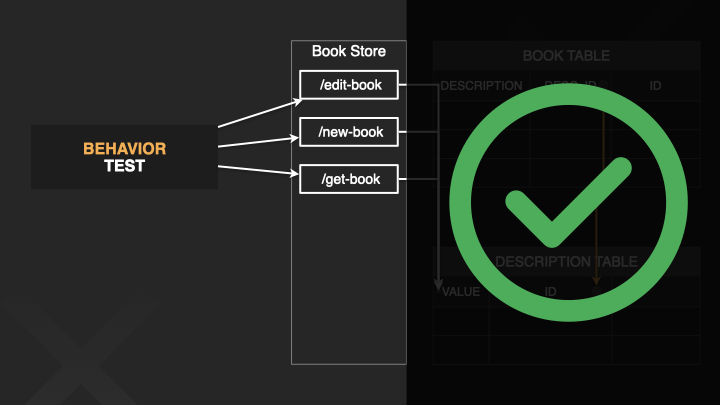

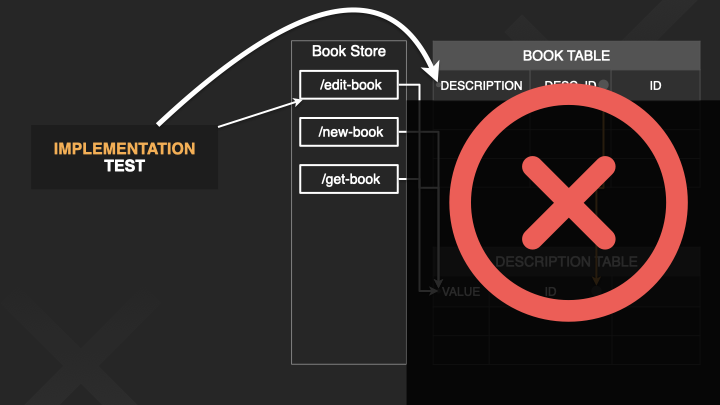

We will consider two alternative test suites - “behavior tests” and “implementation tests”.

We will take a possible code change, and we’ll imagine how one of the tests is going to behave - once if the change introduced a bug, and once if everything was correct.

We will try to imagine what our life would look like if we had chosen one test suite or the other.

And we’re going to see that in all cases - it’s the behavior test that gives us what we want.

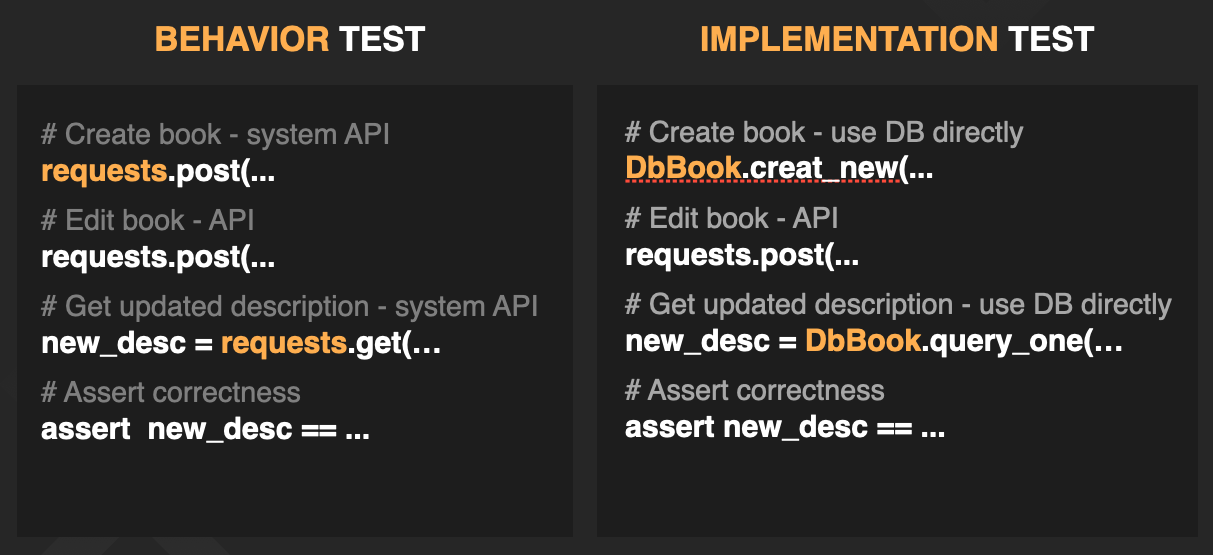

We’ll look at an almost identical test in both test suites.

The test verifies that if we edit the description of a book, then it has really been updated.

Pretty simple.

Both tests have the same flow -

- Create a book

- Edit the book

- Get the updated description

- Make the assertion.

The behavior test does everything through the external http API, IN THE SAME WAY things would be done in the actual system.

The implementation test does some of the things at a lower level. It:

- Creates the book by directly creating a record in the database

- Checks the updated description through the DB.

So the behavior test only looks at the WHAT - It looks at things as they appear from outside.

The implementation test also knows about HOW. It knows how the code will change the DB.
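Here is a rough sketch of the two tests side by side. It is not the real service: I’m assuming an in-memory SQLite database in place of MySQL, and plain method calls (`create_book`, `edit_book`, `get_description`, all hypothetical names) in place of the HTTP endpoints.

```python
import sqlite3


class BookStore:
    """Toy stand-in for the service; its public methods play the role of HTTP endpoints."""

    def __init__(self) -> None:
        self.db = sqlite3.connect(":memory:")
        self.db.execute(
            "CREATE TABLE book (id INTEGER PRIMARY KEY, title TEXT, description TEXT)"
        )

    def create_book(self, title: str, description: str) -> int:
        cursor = self.db.execute(
            "INSERT INTO book (title, description) VALUES (?, ?)", (title, description)
        )
        return cursor.lastrowid

    def edit_book(self, book_id: int, description: str) -> None:
        self.db.execute(
            "UPDATE book SET description = ? WHERE id = ?", (description, book_id)
        )

    def get_description(self, book_id: int) -> str:
        row = self.db.execute(
            "SELECT description FROM book WHERE id = ?", (book_id,)
        ).fetchone()
        return row[0]


def behavior_test(store: BookStore) -> None:
    # Everything goes through the public API: the test only knows the WHAT.
    book_id = store.create_book("Dune", "old text")
    store.edit_book(book_id, "new text")
    assert store.get_description(book_id) == "new text"


def implementation_test(store: BookStore) -> None:
    # Setup and verification reach into the database: the test also knows the HOW.
    book_id = store.db.execute(
        "INSERT INTO book (title, description) VALUES (?, ?)", ("Dune", "old text")
    ).lastrowid
    store.edit_book(book_id, "new text")
    row = store.db.execute(
        "SELECT description FROM book WHERE id = ?", (book_id,)
    ).fetchone()
    assert row[0] == "new text"


behavior_test(BookStore())
implementation_test(BookStore())
```

With this schema both tests pass. The only difference is that the second one has the table and column names baked into it.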

Now, checking the implementation like this will USUALLY be equivalent to the behavior - but not always.

But why does this matter to us?

Our scenario

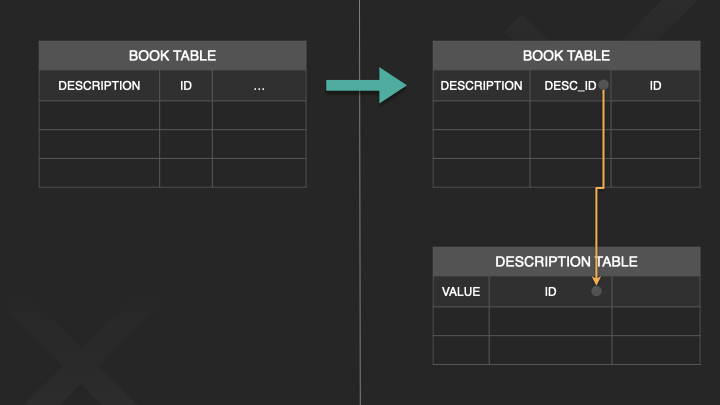

Let’s look at a possible scenario:

We’ve had this test suite for a while, maybe even years.

We’ve invested a lot in it, and we rely on it.

And today, we’re making a change to optimize the database.

We’re moving the description out of the Book table, and into a separate table.

However, we’re not deleting the old field yet - we’ll do that later after all the data has moved to the new table.

Let’s say we’re finished with everything else - and it’s time to update the edit-book endpoint.

We’ll check what happens if we created a bug, and if we did everything correctly.

What if we created a bug?

Now, what if we just FORGOT to update the edit-book endpoint?

Completely forgot.

The edit-book endpoint still writes to the old field, which is now the wrong place in the database, so behavior-wise it doesn’t do anything.

If this gets to production, then we created a major bug :(

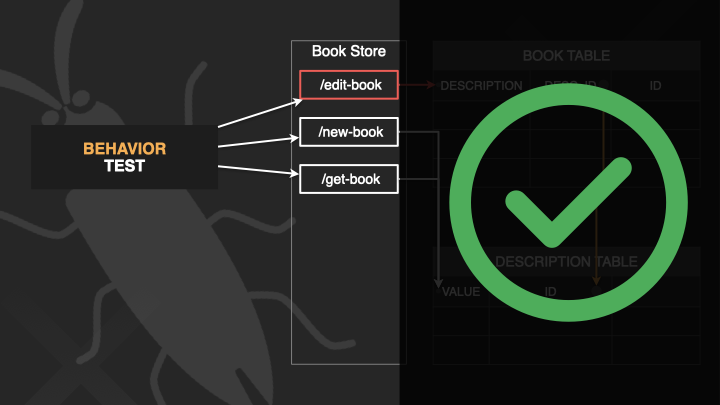

Bug + behavior tests -> good

If we chose behavior tests -

Since the test only uses the external API, it does not care about implementation details.

So if the behavior is wrong, the test will fail, just like it should.

The regression bug was prevented.

Everything’s ok.

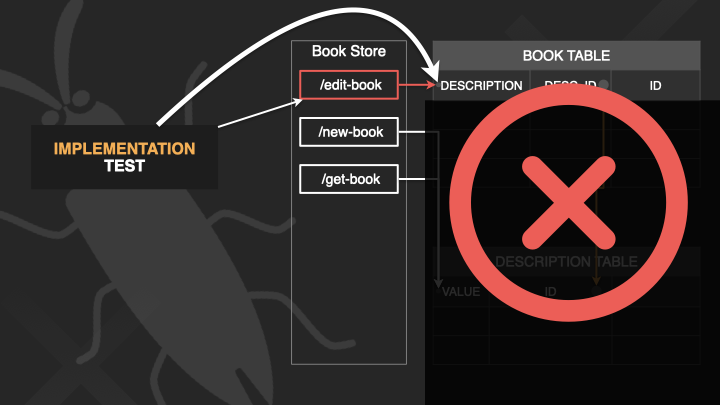

Bug + implementation tests -> not good

But if we chose the implementation test - it looks directly at the old description field in the DB.

When we run the test, the old description field will change, just like before, so the test will not fail.

The regression bug was not prevented. And a major bug made it to production.

It’s not ok.

What if we did everything correctly and there’s no bug?

On the other side of this, what if we made the change correctly?

Edit-book now changes the new table instead of the old field.

No bug, everything’s fine.

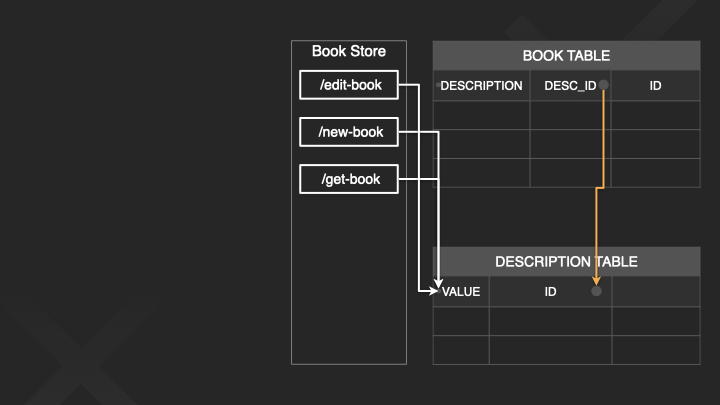

No bug + behavior tests -> good

If we chose the behavior test -

Everything behaves correctly when you just invoke the external endpoints, so the test will pass.

We don’t need to do anything.

No bug + implementation tests -> not good

If we chose the implementation test - the old field is no longer updated, so even though the code is correct, this test will fail.

The important distinction here is that the test does not fail because the code is incorrect.

The test fails because it has become technically invalid.

So, we have extra work - we need to figure out whether the failure is real or technical.

And then we’ll need to update the test.

Also - because we just changed the test, we now have less confidence in it. We need to learn to trust it again.

This is worse on large code bases

On large code bases, this can become a real pain.

You have to update the tests, even if the code change has no bugs, and sometimes even if the test has nothing to do with the feature you worked on.

You end up wasting hours and you hate the test suite.

Summing up our thought experiment

We can see that in every case we looked at - the behavior test was much better.

Cohesive behavior tests are closer to reality.

They are better at protecting us.

They create less redundant work.

And we have higher confidence in them in the long run.
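The four outcomes can be reproduced in a small sketch. As before, this is a toy under my own assumptions: SQLite instead of MySQL, method calls instead of HTTP endpoints, a made-up `book_description` table for the new location, and a made-up `edit_is_updated` flag that switches between the forgotten endpoint and the corrected one.

```python
import sqlite3


class MigratedBookStore:
    """Toy service after the schema change; `edit_is_updated=False` simulates the bug."""

    def __init__(self, edit_is_updated: bool) -> None:
        self.edit_is_updated = edit_is_updated
        self.db = sqlite3.connect(":memory:")
        # The old column is kept for now; descriptions are read from the new table.
        self.db.execute(
            "CREATE TABLE book (id INTEGER PRIMARY KEY, title TEXT, description TEXT)"
        )
        self.db.execute(
            "CREATE TABLE book_description (book_id INTEGER PRIMARY KEY, text TEXT)"
        )

    def _write_new_table(self, book_id: int, description: str) -> None:
        self.db.execute(
            "INSERT OR REPLACE INTO book_description (book_id, text) VALUES (?, ?)",
            (book_id, description),
        )

    def create_book(self, title: str, description: str) -> int:
        book_id = self.db.execute(
            "INSERT INTO book (title) VALUES (?)", (title,)
        ).lastrowid
        self._write_new_table(book_id, description)
        return book_id

    def edit_book(self, book_id: int, description: str) -> None:
        if self.edit_is_updated:
            self._write_new_table(book_id, description)
        else:  # the forgotten endpoint still writes the old column
            self.db.execute(
                "UPDATE book SET description = ? WHERE id = ?", (description, book_id)
            )

    def get_description(self, book_id: int) -> str:
        row = self.db.execute(
            "SELECT text FROM book_description WHERE book_id = ?", (book_id,)
        ).fetchone()
        return row[0]


def behavior_test(store: MigratedBookStore) -> None:
    book_id = store.create_book("Dune", "old text")
    store.edit_book(book_id, "new text")
    assert store.get_description(book_id) == "new text"


def implementation_test(store: MigratedBookStore) -> None:
    # Written years ago against the old schema: it inserts and checks the old column.
    book_id = store.db.execute(
        "INSERT INTO book (title, description) VALUES (?, ?)", ("Dune", "old text")
    ).lastrowid
    store.edit_book(book_id, "new text")
    row = store.db.execute(
        "SELECT description FROM book WHERE id = ?", (book_id,)
    ).fetchone()
    assert row[0] == "new text"


def passes(test, edit_is_updated: bool) -> bool:
    """Run one test against a fresh store and report whether it passed."""
    try:
        test(MigratedBookStore(edit_is_updated))
        return True
    except AssertionError:
        return False


outcomes = {
    ("bug", "behavior"): passes(behavior_test, edit_is_updated=False),
    ("bug", "implementation"): passes(implementation_test, edit_is_updated=False),
    ("no bug", "behavior"): passes(behavior_test, edit_is_updated=True),
    ("no bug", "implementation"): passes(implementation_test, edit_is_updated=True),
}
```

In this sketch the behavior test fails with the bug and passes without it, while the implementation test does the opposite: it passes with the bug and fails on the correct code.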

What about big changes?

One more thing worth mentioning: we looked at an example of a small, incremental change.

But sometimes, we need to make BIG changes. SCARY changes.

It happens less often but when it happens it’s a big deal.

Large DB changes are a good example:

In many companies, at some point, the DB doesn’t deal with the scale well.

We get stability issues, and we need to make a big change - maybe even move some of the data to a different type of database.

That’s when tests are MOST important.

And if we went with behavior-level tests - everything will be fine.

Those same tests that we’ve been running with for 3 years now - we don’t change them.

When they pass, they give us a very strong indication that the logical behavior remains intact.

But if we went with implementation-level tests - they all become technically invalid and they all fail.

We will need to spend time and effort porting all of them to use the new database.

But FAR more importantly: because we’re changing them - we’re not going to trust them enough.

WE WILL TEST EVERYTHING FROM SCRATCH.

This might make the difference between a project that takes a few weeks, and a company-level event that drags out for months while the product has stability issues.
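A sketch of why the behavior-level test survives this kind of change: the same unchanged test runs against two completely different storage backends. Everything here is hypothetical - `SqlBookStore` uses in-memory SQLite as a stand-in for the old database, and `DocumentBookStore` uses a plain dict as a stand-in for “a different type of database”.

```python
import sqlite3
from typing import Protocol


class BookStoreApi(Protocol):
    """The external behavior the test depends on, and nothing more."""

    def create_book(self, title: str, description: str) -> int: ...
    def edit_book(self, book_id: int, description: str) -> None: ...
    def get_description(self, book_id: int) -> str: ...


class SqlBookStore:
    """Stand-in for the service on the old relational database."""

    def __init__(self) -> None:
        self.db = sqlite3.connect(":memory:")
        self.db.execute(
            "CREATE TABLE book (id INTEGER PRIMARY KEY, title TEXT, description TEXT)"
        )

    def create_book(self, title: str, description: str) -> int:
        return self.db.execute(
            "INSERT INTO book (title, description) VALUES (?, ?)", (title, description)
        ).lastrowid

    def edit_book(self, book_id: int, description: str) -> None:
        self.db.execute(
            "UPDATE book SET description = ? WHERE id = ?", (description, book_id)
        )

    def get_description(self, book_id: int) -> str:
        return self.db.execute(
            "SELECT description FROM book WHERE id = ?", (book_id,)
        ).fetchone()[0]


class DocumentBookStore:
    """Stand-in for the service after moving to a different kind of storage."""

    def __init__(self) -> None:
        self.docs: dict[int, dict[str, str]] = {}

    def create_book(self, title: str, description: str) -> int:
        book_id = len(self.docs) + 1
        self.docs[book_id] = {"title": title, "description": description}
        return book_id

    def edit_book(self, book_id: int, description: str) -> None:
        self.docs[book_id]["description"] = description

    def get_description(self, book_id: int) -> str:
        return self.docs[book_id]["description"]


def behavior_test(store: BookStoreApi) -> None:
    # Unchanged across the migration: it never mentions tables, columns, or documents.
    book_id = store.create_book("Dune", "old text")
    store.edit_book(book_id, "new text")
    assert store.get_description(book_id) == "new text"


for store in (SqlBookStore(), DocumentBookStore()):
    behavior_test(store)
```

A test that ran SQL against the `book` table could not even start against `DocumentBookStore`; it would have to be ported first, which is exactly the work and the loss of trust described above.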

Conclusion

I cannot recommend enough:

Test behavior.

A cohesive whole, a complete story.